Comparison of State-of-the-Art and Traditional Networks for Image Segmentation on the ISPRS Potsdam Dataset

Authors

- Menghua, Xie

- Leung, Yiu Chung

Introduction

This project focuses on deep learning methods and provides scripts and notebooks that help build, train, and analyze models efficiently. The structure includes various components such as configuration settings, model creation tools, and utilities for model training and visualization.

Dataset

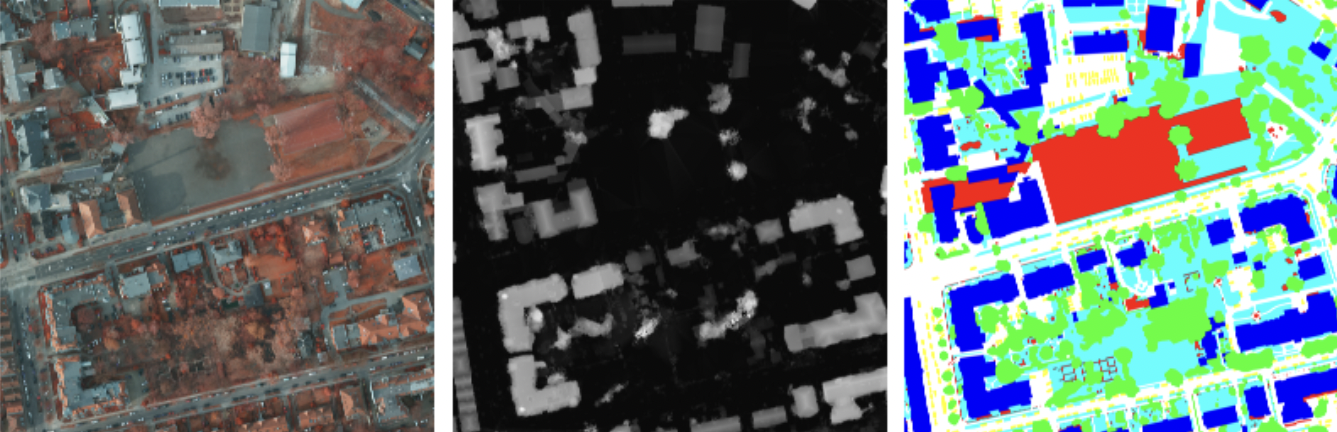

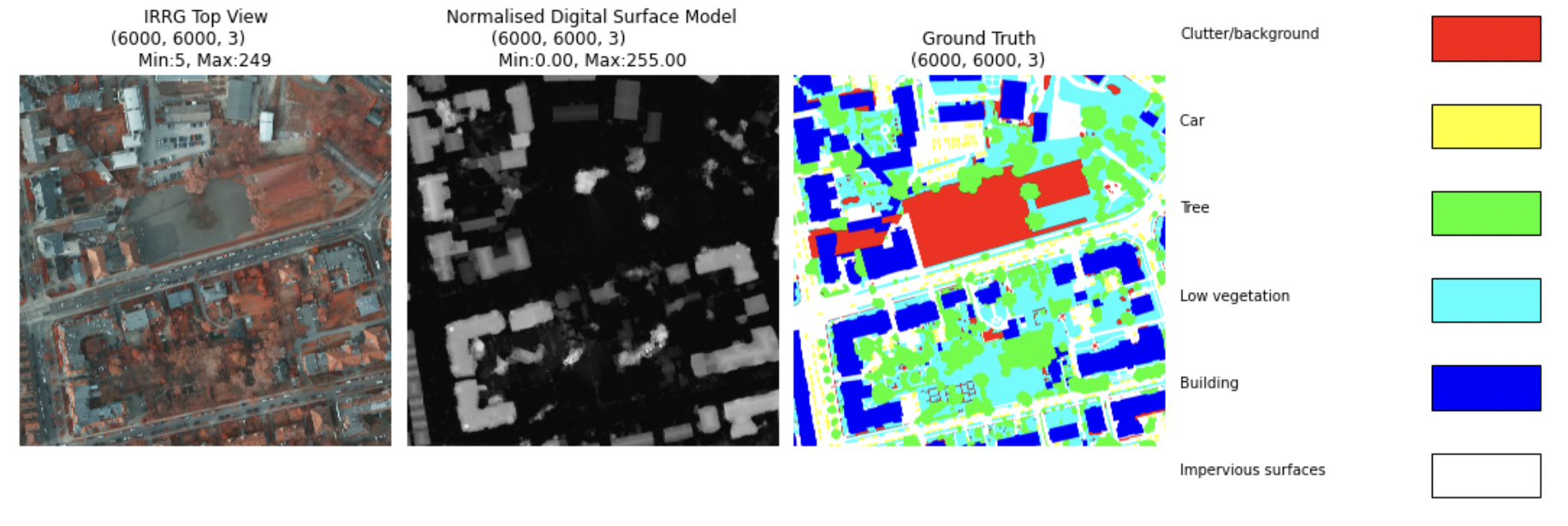

ISPRS Potsdam Dataset

This dataset includes 37 images per type (IRRG, digital surface model, and label).

Each image is 6000 × 6000 × 3.

Model(s)

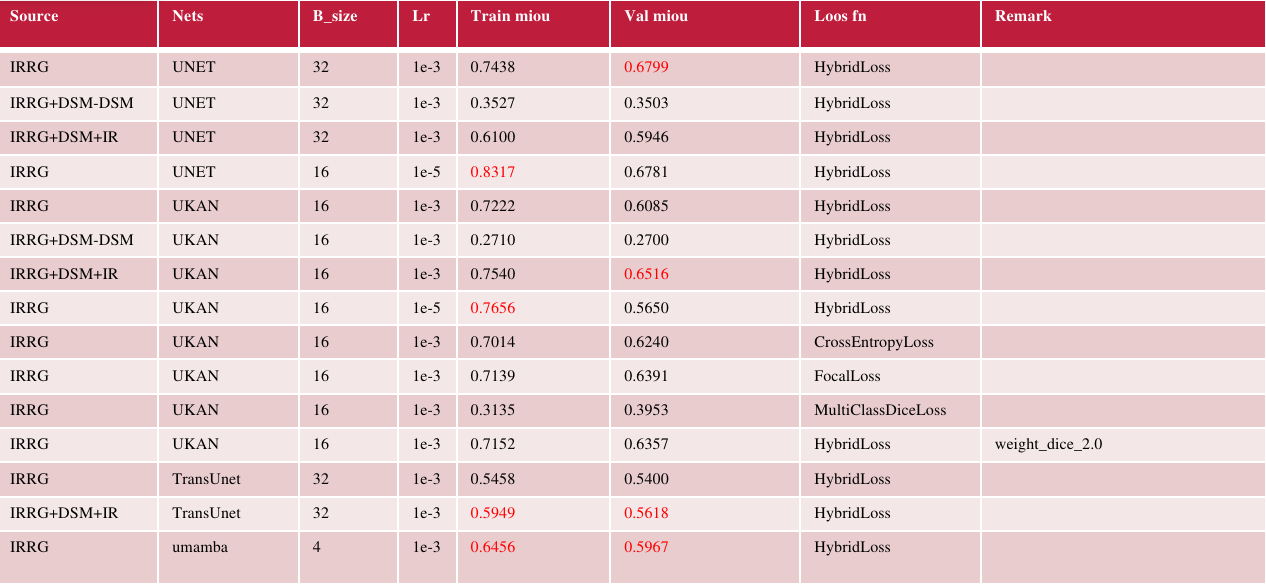

We used U-Net, TransUNet, U-Mamba, and U-KAN, as shown in the figure above.

Results

Regarding the effects of the data sources:

- Adding the DSM to the training data gave no improvement for U-Net.

- It gave a noticeable improvement for the larger networks, e.g. U-KAN and TransUNet.
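One way to feed the DSM to a network alongside the IRRG image is to append it as a fourth input channel. The sketch below illustrates that idea only. It assumes 8-bit IRRG tiles and per-tile min-max scaling of the heights, and the function name `stack_irrg_dsm` is hypothetical; the project may combine the two sources differently.

```python
import numpy as np


def stack_irrg_dsm(irrg, dsm):
    """Concatenate an H x W x 3 IRRG tile with an H x W DSM into H x W x 4."""
    if irrg.shape[:2] != dsm.shape[:2]:
        raise ValueError("IRRG and DSM must share height and width")
    # Assumption: IRRG is 8-bit, so dividing by 255 maps it to [0, 1].
    irrg = irrg.astype(np.float32) / 255.0
    dsm = dsm.astype(np.float32)
    # Assumption: min-max scale heights per tile so they share the colour range.
    span = dsm.max() - dsm.min()
    dsm = (dsm - dsm.min()) / span if span > 0 else np.zeros_like(dsm)
    return np.concatenate([irrg, dsm[..., None]], axis=-1)


# Small synthetic stand-ins for a real tile.
rng = np.random.default_rng(0)
irrg = rng.integers(0, 256, size=(8, 8, 3), dtype=np.uint8)
dsm = rng.random((8, 8)).astype(np.float32) * 40.0
x = stack_irrg_dsm(irrg, dsm)
print(x.shape)  # (8, 8, 4)
```

With this layout, the first convolution of each model would need four input channels instead of three.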

Regarding loss functions:

- The experiments with U-KAN showed no significant difference between HybridLoss, CrossEntropy, and FocalLoss.

- The failure of MultiClassDiceLoss might be due to the class imbalance in the dataset.
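To make the comparison concrete, here is a minimal sketch of textbook cross-entropy, focal, and multi-class soft Dice losses on flattened per-pixel probabilities. These are not necessarily the definitions used in the project, and HybridLoss is left out because its composition is not stated here. The function names and the toy data are assumptions for illustration.

```python
import math


def cross_entropy(probs, target):
    """Mean negative log-probability of the true class over all pixels."""
    return -sum(math.log(p[t]) for p, t in zip(probs, target)) / len(target)


def focal_loss(probs, target, gamma=2.0):
    """Cross-entropy with each pixel down-weighted by (1 - p_t) ** gamma."""
    total = sum(
        -((1.0 - p[t]) ** gamma) * math.log(p[t]) for p, t in zip(probs, target)
    )
    return total / len(target)


def multiclass_dice_loss(probs, target, num_classes, eps=1e-6):
    """1 minus the soft Dice score, averaged over classes with equal weight."""
    dice_scores = []
    for c in range(num_classes):
        intersection = sum(p[c] for p, t in zip(probs, target) if t == c)
        pred_mass = sum(p[c] for p in probs)
        true_mass = sum(1 for t in target if t == c)
        dice_scores.append((2.0 * intersection + eps) / (pred_mass + true_mass + eps))
    return 1.0 - sum(dice_scores) / num_classes


# Toy imbalanced case: 9 pixels of class 0, 1 pixel of class 1,
# and a model that favours class 0 everywhere.
probs = [[0.9, 0.1] for _ in range(10)]
target = [0] * 9 + [1]

print("cross-entropy:", cross_entropy(probs, target))
print("focal:", focal_loss(probs, target))
print("dice:", multiclass_dice_loss(probs, target, num_classes=2))
```

In this toy case the single rare-class pixel contributes half of the Dice loss, because every class is weighted equally regardless of its pixel count. That sensitivity is one plausible reason a Dice-only objective can struggle on imbalanced labels.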

Regarding the effects of the learning rate:

- For U-KAN and U-Net, changing the learning rate significantly improved the training mIoU but not the validation mIoU.

- This is a sign of overfitting; 1e-3 should be a better learning rate.
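The mIoU values compared above can be computed from a confusion matrix. The following is a minimal sketch on flattened label maps, assuming classes that appear in neither map are skipped in the average; the project's own metric code may handle that case differently, and the name `mean_iou` is hypothetical.

```python
def mean_iou(y_true, y_pred, num_classes):
    """Mean intersection-over-union over classes for flat label sequences."""
    # confusion[t][p] counts pixels of true class t predicted as class p.
    confusion = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        confusion[t][p] += 1

    ious = []
    for c in range(num_classes):
        tp = confusion[c][c]
        fn = sum(confusion[c]) - tp
        fp = sum(row[c] for row in confusion) - tp
        union = tp + fp + fn
        if union > 0:  # skip classes absent from both maps
            ious.append(tp / union)
    return sum(ious) / len(ious) if ious else 0.0


# Toy example with three classes.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
print(mean_iou(y_true, y_pred, num_classes=3))
```

Evaluating this on both the training and validation splits after each epoch exposes the gap described above: a training mIoU that keeps rising while the validation mIoU stays flat points to overfitting.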